The mistake was made in mid-April 2026, and it was a small one in the way that wrong things made quickly tend to be small: a single failed API call, a glance at an error message, a conclusion that the feature did not work, and a release note shipped in v1.1 of the great-filmmakers-plugin declaring it so. The release note routed users away from the feature. The plugin's documentation followed the release note. By the time the writer at the desk noticed something was off, the wrong claim had been live for about a week.

The feature in question was reference images on Veo 3.1 Fast preview: the ability to send up to three character reference stills with a video generation request, so that a character in shot one still reads as the same person in shot four. The plugin had been built for a trilogy short film. Character continuity was not a luxury for that work; it was the assignment. So the claim that reference images were not supported on the Gemini API tier mattered, and the fact that the claim was wrong mattered more.

The probe that misled

What the writer did, in mid-April, was run a single probe. He read a section of Google's docs page, copied a request shape from an example that turned out to be misleading, set the duration to four seconds because four seconds was what his text-only prompts had been using, set an aspect ratio he had been getting away with elsewhere, attached two reference stills, and sent the request. The API returned an error. The error was the kind of message a tired engineer reads and stops reading:

"Your use case is currently not supported."

He concluded the feature was unsupported. He wrote that in the release notes. He shipped v1.1.

The trouble with the probe was not that it failed. The trouble was that it varied three things at once. The request shape was wrong: he had wrapped the reference stills in an inlineData envelope copied from a stale read of a docs example, and the API rejects that envelope; the correct shape is a flat object with bytesBase64Encoded, mimeType, and a referenceType field set to the lowercase string "asset". The duration was wrong: Veo 3.1 silently rejects four- and six-second clips when reference images are attached and accepts only eight. The aspect ratio was wrong: with reference images present, Veo silently rejects ratios other than 16:9. Three constraints, one error message, no isolation.
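The three constraints are easier to respect when they are written down as a check that runs before the call. The following is a minimal Python sketch. The field names `bytesBase64Encoded`, `mimeType` and `referenceType: "asset"` come from the paragraph above. Everything else is an assumption for illustration and not part of any Google SDK: the helper names, the `image/png` default, and the idea of validating locally. The sketch does not show where this object sits inside the full request body.

```python
import base64
from typing import Dict, List

# Per the article: up to three character reference stills per request.
MAX_REFERENCE_IMAGES = 3


def reference_image(data: bytes, mime_type: str = "image/png") -> Dict[str, str]:
    """Build one reference-image object in the flat shape the article describes:
    no inlineData wrapper, and referenceType is the lowercase string "asset".
    The image/png default is an assumption; pass the still's real MIME type."""
    return {
        "bytesBase64Encoded": base64.b64encode(data).decode("ascii"),
        "mimeType": mime_type,
        "referenceType": "asset",
    }


def reference_constraint_violations(
    duration_seconds: int, aspect_ratio: str, n_refs: int
) -> List[str]:
    """Report every violated constraint at once instead of stopping at the
    first, so one generic API error can't hide the other two."""
    problems: List[str] = []
    if n_refs == 0:
        return problems  # these constraints only apply when references are attached
    if n_refs > MAX_REFERENCE_IMAGES:
        problems.append(f"at most {MAX_REFERENCE_IMAGES} reference images, got {n_refs}")
    if duration_seconds != 8:
        problems.append(f"duration must be 8 s with reference images, got {duration_seconds}")
    if aspect_ratio != "16:9":
        problems.append(f"aspect ratio must be 16:9 with reference images, got {aspect_ratio}")
    return problems


# The April probe's settings; "9:16" is a stand-in, since the ratio used isn't named.
print(reference_constraint_violations(4, "9:16", 2))
print(reference_constraint_violations(8, "16:9", 2))  # []
print(reference_image(b"abc"))
```

Listing every violation, rather than raising on the first one, is deliberate. It is the opposite of what the API did, which was to collapse three problems into one message.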

To say the feature was unsupported on the basis of that probe was, strictly, to say that some combination of those three constraints was unsupported. The probe could not say which. It said only that the request, as posed, did not render.

What v1.1 told the user

The v1.1 release notes for great-filmmakers were specific in a way that made the mistake worse. They did not hedge. They did not say "we could not get reference images to render in our test." They said the feature was unusable on the Gemini API tier and routed users to a workaround the plugin called inline character anchoring, a technique that bakes character description into the text prompt itself, scene by scene. Inline anchoring works, after a fashion, but it is a thinner instrument. It cannot do what a reference image does, which is hand the model a face and a wardrobe and say: this person, again.

So users of the plugin in the week after April 18 were quietly steered away from the better tool toward the lesser one, on the writer's authority, on the basis of a probe that had varied three things at once.

The pushback

The correction came from a reader. He pointed at research suggesting reference images did, in fact, work, and he linked to a forum thread on Google's developer forum titled, with a precision the writer would later quote in his own notes:

"Veo 3.1 Reference Images: Docs Say Available, API Says Not Supported."

The thread, at discuss.ai.google.dev/t/veo-3-1-reference-images-docs-say-available-api-says-not-supported/111853, was the canonical evidence. It contained two things the writer's probe had lacked. The first was a Google staff confirmation that reference images required 16:9. The second was a working request body posted by a community member, with a flat bytesBase64Encoded shape and a referenceType of "asset". Both pieces of evidence had been on the public internet during the week the wrong claim was live in the plugin's docs. Neither had been consulted before shipping.

The writer re-tested on April 26, 2026.

The corrected probe

He used the same model — Veo 3.1 Fast preview. He fixed the request shape: flat object, bytesBase64Encoded and mimeType at the top level, referenceType: "asset", lowercase. He fixed the duration: eight seconds. He fixed the aspect ratio: 16:9. He attached two character reference stills from the trilogy short. He sent the request.

It rendered. First try.

The render came back at fifty-eight seconds. The two characters in the clip were recognizably the two characters in the reference stills. Wardrobe held. Faces held. The thing the plugin's documentation had said could not be done had just been done, on the same API tier, by the same writer, at the same desk, with all three constraints corrected.

What the corrected probe demonstrated was not that Veo 3.1 supported reference images — the docs and the forum had said as much — but that the original probe had proved nothing about Veo 3.1. It had proved that one or more of three independently varied constraints was wrong. The correct response to that result was a multi-axis probe that held two constraints fixed and varied the third. The response actually given was a release note declaring the feature unsupported. The two responses are not similar.
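The probe that should have been run can be sketched in a few lines of Python. `fake_render` is a hypothetical stand-in for the API that hard-codes the three constraints described above. `isolate_failing_axes` is likewise a hypothetical helper. The sketch assumes the constraints don't interact, and it assumes a baseline request that is already known to render, such as the working body posted in the forum thread. It then swaps in the failing request's value one axis at a time and holds everything else fixed.

```python
from typing import Callable, Dict, List

Request = Dict[str, object]


def fake_render(req: Request) -> bool:
    """Hypothetical stand-in for the video API. It hard-codes the three
    constraints from the article for requests that carry reference images."""
    return (
        req["shape"] == "flat"
        and req["duration"] == 8
        and req["aspect_ratio"] == "16:9"
    )


def isolate_failing_axes(
    render: Callable[[Request], bool],
    known_good: Request,
    candidate: Request,
) -> List[str]:
    """Vary one axis at a time: copy a request known to render, swap in the
    candidate's value on a single axis, hold every other axis fixed, and
    record the axes whose swap makes the render fail."""
    if not render(known_good):
        raise ValueError("the baseline must render before it can isolate anything")
    failing: List[str] = []
    for axis, value in candidate.items():
        probe = dict(known_good)
        probe[axis] = value
        if not render(probe):
            failing.append(axis)
    return failing


# Baseline modeled on the working body from the forum thread.
known_good: Request = {"shape": "flat", "duration": 8, "aspect_ratio": "16:9"}
# The April probe; "9:16" is a stand-in, since the article doesn't name the ratio used.
april_probe: Request = {"shape": "inlineData", "duration": 4, "aspect_ratio": "9:16"}

print(isolate_failing_axes(fake_render, known_good, april_probe))
# ['shape', 'duration', 'aspect_ratio']
```

The known-good baseline is what makes the isolation work. Suppose you instead started from the all-wrong April request and fixed one axis at a time. Every single-axis change would still fail, because the other two wrong values would stay in place.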

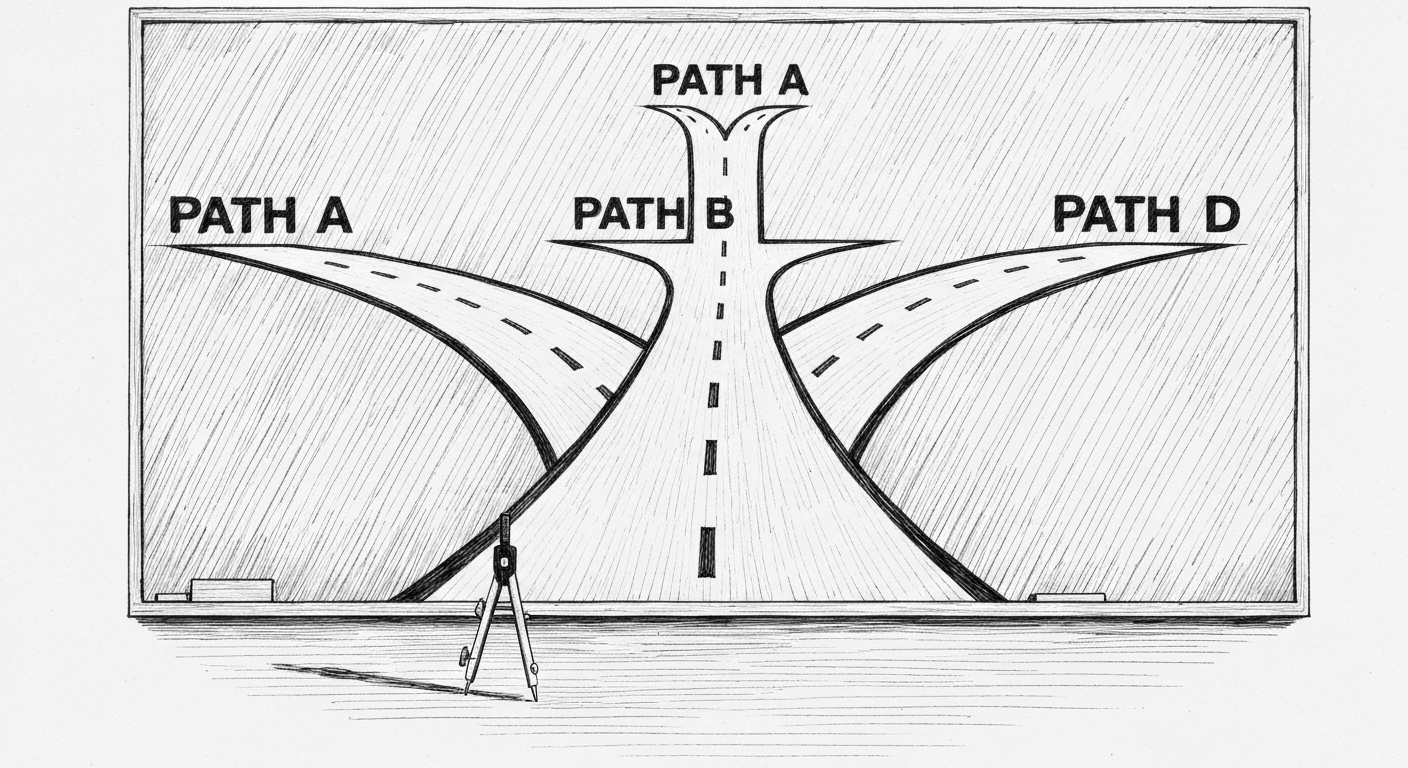

Four paths, now documented

v1.4 shipped today. It corrects the documentation and, while doing so, expands the render paths from two to four. The expansion came out of a head-to-head shootout the writer ran across three services using a single composition from the trilogy short — a wide on three figures — plus a ten-shot full re-render of the trilogy through one of the new paths.

The four paths now sit in the plugin's documentation as a decision matrix:

| Path | Service | Durations (s) | Best for |
|---|---|---|---|
| A (default) | Veo 3.0 Fast text-to-video | {4, 6, 8} | Multi-shot stylized series |
| B | Veo 3.1 Fast preview + reference images | {8} | Multi-character continuity |
| C (new) | Kling 2.5 Turbo image-to-video | {5, 10} | Single cinematic clip from an art-directed still |
| D (new) | Leonardo Motion 2.0 image-to-video | {5} | Atmospheric B-roll, no character identity |
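The matrix can also be carried as data, which makes the duration constraints checkable before a render is queued. The following Python sketch encodes the four rows as written. `paths_supporting` and `pick_path` are hypothetical helpers. In particular, `pick_path` is one illustrative reading of the "Best for" column and not the plugin's actual routing logic.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class RenderPath:
    name: str
    service: str
    durations: FrozenSet[int]  # allowed clip lengths, in seconds
    best_for: str


# The four rows of the decision matrix.
PATHS = [
    RenderPath("A", "Veo 3.0 Fast text-to-video", frozenset({4, 6, 8}),
               "Multi-shot stylized series"),
    RenderPath("B", "Veo 3.1 Fast preview + reference images", frozenset({8}),
               "Multi-character continuity"),
    RenderPath("C", "Kling 2.5 Turbo image-to-video", frozenset({5, 10}),
               "Single cinematic clip from an art-directed still"),
    RenderPath("D", "Leonardo Motion 2.0 image-to-video", frozenset({5}),
               "Atmospheric B-roll, no character identity"),
]


def paths_supporting(seconds: int) -> List[str]:
    """Names of the paths whose allowed durations include `seconds`."""
    return [p.name for p in PATHS if seconds in p.durations]


def pick_path(character_refs: bool, from_still: bool, needs_identity: bool) -> str:
    """Hypothetical routing: one reading of the 'Best for' column."""
    if character_refs:
        return "B"  # reference stills carry faces and wardrobe across shots
    if from_still:
        # Leonardo figures drift, so keep Path D to identity-free B-roll.
        return "C" if needs_identity else "D"
    return "A"  # the default for multi-shot series


print(paths_supporting(8))   # ['A', 'B']
print(paths_supporting(10))  # ['C']
print(pick_path(False, False, False))  # A
```

One thing the data view makes obvious is that the Veo paths and the image-to-video paths share no clip length. A shot list written in eight-second beats can't move to Path C or D without re-timing.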

The shootout produced three findings the writer wrote into the brain vault under learnings/video-gen-services-comparison.md. The first: all three services finish a short clip in fifty to seventy-five seconds. Speed is no longer a differentiator. The second: on a single shot, Veo 3.1 and Kling 2.5 Turbo are roughly tied on quality. The writer's note in the comparison file reads, plainly, "hard to tell which was which." The third, and the one that mattered for the trilogy: at project scale, Path A still wins.

The reason Path A still wins for series work is structural. Text-to-video keeps the composition consistent across shots because each shot is rendered from the same prompt grammar, the same instruction about framing, the same negative space. Image-to-video pipelines — Kling, Leonardo Motion — inherit composition flaws from the source still. The writer learned this in a way only a stress test can teach: he re-rendered the full ten-shot trilogy through Kling, using stills produced by a separate image generator. The Kling clips came back beautifully animated and subtly wrong. The figures read as grounded in a stage floor, because the source stills had introduced floor surfaces the original Veo text-to-video would have rendered as void. The composition error had been baked into the still and Kling had honored it.

Leonardo Motion 2.0, at five cents a clip, has its own documented limitation. Figures drift. A character asked to hold a pose will, over five seconds, shift unnaturally. The writer logged the drift in the same comparison file. Path D is in the matrix not because Leonardo Motion can do what Veo can do but because, for atmospheric B-roll where character identity does not matter, five cents a clip is five cents a clip.

Kling 2.5 Turbo, by contrast, costs about a dollar per five-second clip. The trilogy re-render through Kling cost real money and produced a real lesson, which is what the writer wrote down.
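The price gap between the two image-to-video paths is easy to express in numbers. The following is a back-of-envelope Python sketch that uses only the per-clip prices quoted above. It assumes every shot is a single five-second clip with no re-rolls, and it ignores ten-second Kling clips, whose price the article doesn't give. It is not the actual bill for the trilogy re-render.

```python
# Approximate prices quoted in the article, in US cents per five-second clip.
# Integer cents avoid floating-point rounding in the totals.
PRICE_CENTS_PER_CLIP = {
    "C (Kling 2.5 Turbo)": 100,    # "about a dollar per five-second clip"
    "D (Leonardo Motion 2.0)": 5,  # "five cents a clip"
}


def estimate_cents(path: str, clips: int) -> int:
    """Rough cost of `clips` five-second clips on one path, one take per clip."""
    return PRICE_CENTS_PER_CLIP[path] * clips


def as_dollars(cents: int) -> str:
    return f"${cents // 100}.{cents % 100:02d}"


# Ten shots, matching the trilogy's shot count.
for path in PRICE_CENTS_PER_CLIP:
    print(path, as_dollars(estimate_cents(path, 10)))
# C (Kling 2.5 Turbo) $10.00
# D (Leonardo Motion 2.0) $0.50
```
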

What the brain vault now remembers

The meta-lesson from the wrong probe is unglamorous. When an API rejects a request that has multiple silent constraints, a single failed call proves nothing about the feature. It proves at most that some combination of constraints is wrong. To honestly conclude that a feature is unsupported, the engineer must vary one constraint at a time, holding the others fixed at values known to work, until the failing axis is isolated. Otherwise he has combined N wrong things and concluded the feature itself is missing, which is what the writer did, and which is what shipped in v1.1.
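"Some combination" can be made concrete. Assume, for simplicity, that any single violated constraint is enough to fail the call. Then N constraints leave 2^N - 1 non-empty sets of possible culprits, and the hypothesis that the feature is simply missing sits alongside all of them. The following Python sketch, with a hypothetical helper name, enumerates those sets for the article's three axes.

```python
from itertools import combinations
from typing import List, Sequence, Tuple


def explanations_for_one_failure(constraints: Sequence[str]) -> List[Tuple[str, ...]]:
    """Every non-empty set of violated constraints that could produce a single
    failed call, assuming any one violation is enough to make the call fail."""
    return [
        combo
        for k in range(1, len(constraints) + 1)
        for combo in combinations(constraints, k)
    ]


axes = ["request shape", "duration", "aspect ratio"]
hypotheses = explanations_for_one_failure(axes)
print(len(hypotheses))  # 7, which is 2**3 - 1
for h in hypotheses:
    print(" + ".join(h))
```

A single failed call can't tell these seven apart from one another or from "the feature is missing." Only further probes, each changing one thing, can rank them.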

The corollary, for public-facing claims, is harsher. Do not ship "feature X does not work" in documentation without one of three things: a Google staff confirmation, a multi-axis probe that varied each constraint independently, or a forum search that turned up no community-confirmed working request. The v1.1 release notes failed all three tests. The forum thread that would have prevented the mistake was already on the public internet on the day v1.1 shipped.

The writer wrote the meta-lesson into the brain vault as learnings/veo-3-api-constraints.md. The file lists the three silent constraints that misled the original probe, the corrected request shape with the lowercase "asset" string, the eight-second duration requirement, the 16:9 aspect ratio requirement, and the forum thread URL. A companion file at learnings/image-gen-tier-system.md documents the upstream stills pipeline that feeds Paths C and D, so future shootouts start from a clearer baseline.

The brain vault is the part of the system the writer cares about most when he is writing about a mistake. It is where the next probe gets to start. The trilogy of plugins described in "Three Shapes of the Same Pattern" and the autonomous office described in "The Office That Worked While He Was Out" both depend on a brain vault that does not let the same wrong conclusion ship twice. The v1.4 correction is in the documentation. The forum thread is in the documentation. The four paths are in the documentation. The lowercase string "asset" is in the documentation. So is the eight.

Seth Shoultes builds things at garagedoorscience.com and writes about them occasionally.