There is a specific kind of frustration known to the AI operator: the Dig. It begins when a model produces a response that is almost right but structurally flawed, or wrong in a way that feels like a simple misunderstanding. The operator responds by adding a constraint. “That’s good, but make it more concise.” Then another. “Now remove the introductory paragraph and use a bulleted list.” Then a third, more desperate plea. “I explicitly asked for no adjectives; please follow the instructions exactly.”

This is the Digging Fallacy. It is the belief that the desired output is buried slightly deeper in the current conversation, and that with a more precise shovel—a more granular prompt, a more restrictive set of constraints—it can be unearthed. The operator becomes obsessed with the wording of the prompt, treating the interaction as a negotiation with a ghost. They believe they are refining a request. In reality, they are merely adding noise to a failing channel.

The Geology of the Model

To understand why digging fails, one must stop viewing the Large Language Model as a monolithic intelligence and start viewing it as a map of probabilistic terrains. A model does not "know" things in the way a human does; it possesses a set of distinct registries of behavior—what we might call channels.

Prompting is not the act of asking a question; it is the act of steering the model into a particular channel. When you tell a model to “act as a senior software engineer,” you are not just giving it a role; you are selecting a frequency. You are shifting the probability distribution over the next token toward a cluster of training data characterized by technical rigor, a preference for efficiency, and a distinct linguistic register.

Once the model is locked into a channel, you can refine the output. You can ask it to be shorter or longer, to change the tone from formal to casual. But you cannot fundamentally change the nature of the channel from within. If the model has entered a "Chatty" channel—a registry of behavior that prizes politeness and filler over density—no amount of “be concise” prompting will ever be as effective as simply switching to a "Clinical" channel.

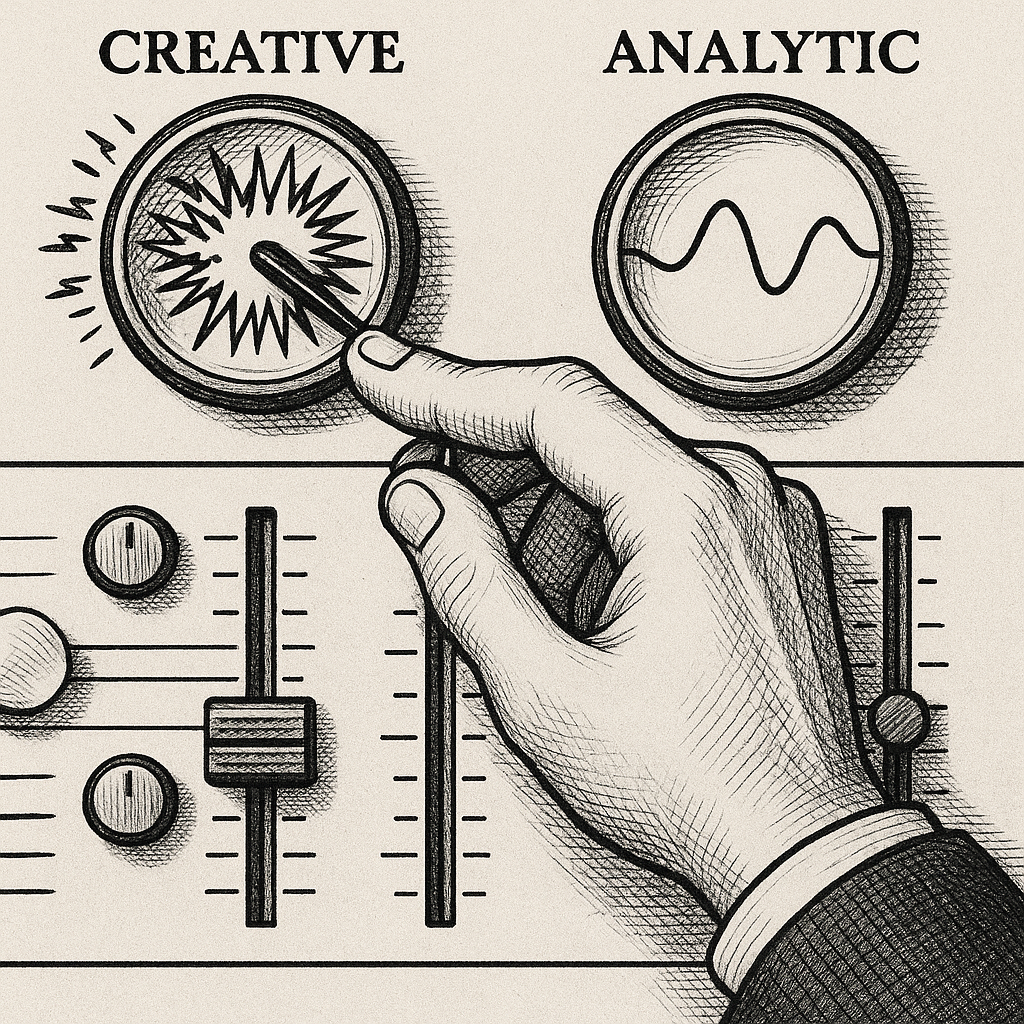

The operator who digs is trying to force a "Creative" channel to act with "Analytic" precision. It is like trying to etch a circuit board with a watercolor brush. You can press harder, refine your stroke, or trim the bristles, but the tool is fundamentally mismatched to the task. The failure is not in the prompting; it is in the route.

The Cost of the Dig

Digging is not merely inefficient; it is actively destructive. Every refinement, every "please do it this way instead," adds to the context window. In a short session, this seems negligible. But as the dig deepens, the context window becomes cluttered with the history of failure. The model is no longer just processing your request; it is processing a record of its own inability to meet that request.

This creates a feedback loop of degradation. The model begins to attend more to the constraints (the “do not do X” and “make sure to do Y”) than to the core objective. The signal-to-noise ratio collapses. By the fifth refinement, the model is often so constrained by the operator's desperation that it loses the ability to be creative or coherent. This aphasia, the over-prompted model's loss of coherent expression, is a direct result of the dig.
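To make the cost concrete, here is a back-of-the-envelope sketch in Python. The token sizes are hypothetical, chosen only for illustration (a 200-token request, 400-token flawed replies, 50-token corrections), and it assumes a typical chat setup in which the whole conversation history is resent to the model on every turn. It tracks how large the context grows and how small a share of it the original request still occupies.

```python
# Hypothetical token sizes, chosen only for illustration.
REQUEST_TOKENS = 200  # the original request
REPLY_TOKENS = 400    # each flawed response kept in the history
NOTE_TOKENS = 50      # each "please do it this way instead"


def context_size(refinements: int) -> int:
    """Tokens in the prompt once `refinements` follow-ups have been sent."""
    return REQUEST_TOKENS + refinements * (REPLY_TOKENS + NOTE_TOKENS)


def request_share(refinements: int) -> float:
    """Fraction of the context still occupied by the original request."""
    return REQUEST_TOKENS / context_size(refinements)


def total_tokens_sent(refinements: int) -> int:
    """Prompt tokens summed over every turn, assuming full history is resent each turn."""
    return sum(context_size(k) for k in range(refinements + 1))


for n in range(6):
    print(f"refinements={n} context={context_size(n)} "
          f"request_share={request_share(n):.0%} total_sent={total_tokens_sent(n)}")
```

With these assumed sizes, by the fifth refinement the context holds 2,450 tokens, of which the original request is about 8%, and 7,950 prompt tokens have been sent across the six turns. Real numbers will differ, but the shape holds: the request's share shrinks with every refinement while the total grows quadratically.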

The cognitive load shifts from the task to the tool. The operator stops thinking about the problem and starts thinking about how to trick the model into solving the problem. This is the point where the work ceases to be intellectual and becomes clerical.

The Three-Strike Rule

The professional operator recognizes the difference between refinement and digging. Refinement is the act of polishing a result that is qualitatively correct. Digging is the act of attempting to fix a result that is qualitatively wrong.

To avoid the dig, one must employ the Three-Strike Rule: If you have refined the prompt three times and the core failure persists, the channel is wrong.

The protocol for the switch is simple: stop negotiating. Do not add another constraint. Do not explain why the previous response was wrong. Instead, change the lens and start again from a clean context. If the "Creative" persona is hallucinating details, switch to the "Researcher" persona. If the "Generalist" is being too vague, switch to the "Technical Architect."

This is not "prompt engineering" in the popular sense of finding the magic word. It is frequency management. You are acknowledging that the current probabilistic path is a dead end and choosing to start over on a different path. The most efficient path to a result is rarely a more detailed prompt; it is almost always a change in the identity of the agent.
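As a rough illustration, the Three-Strike Rule can be sketched as a dispatch loop in Python. Everything here is hypothetical: `generate(persona, messages)` stands in for whatever model call you use, `critique(reply)` stands in for the operator's judgment (returning `None` when the output is acceptable, or a refinement note when it is not), and the persona names are the essay's examples rather than any real API. Each channel gets at most three refinements; if the failure persists, the next persona starts from a fresh message list, with no explanation of what went wrong before.

```python
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Message = Dict[str, str]
# Hypothetical model call: (persona, conversation) -> reply text.
Generate = Callable[[str, List[Message]], str]
# Hypothetical operator judgment: None if acceptable, else a refinement note.
Critique = Callable[[str], Optional[str]]

MAX_REFINEMENTS = 3  # the "three strikes"


def try_channel(generate: Generate, persona: str, task: str,
                critique: Critique) -> Optional[str]:
    """Refine within one channel; give up after MAX_REFINEMENTS failed attempts."""
    messages: List[Message] = [{"role": "user", "content": task}]
    reply = generate(persona, messages)
    for _ in range(MAX_REFINEMENTS):
        note = critique(reply)
        if note is None:
            return reply  # qualitatively correct: refinement was enough
        # Refinement: keep the history and append the new constraint.
        messages.append({"role": "assistant", "content": reply})
        messages.append({"role": "user", "content": note})
        reply = generate(persona, messages)
    # Strikes used up; accept only if the last attempt finally passed.
    return reply if critique(reply) is None else None


def dispatch(generate: Generate, personas: Sequence[str], task: str,
             critique: Critique) -> Optional[Tuple[str, str]]:
    """Route the task through personas in order, each with a clean context."""
    for persona in personas:
        # try_channel builds a new message list, so failed history is dropped.
        reply = try_channel(generate, persona, task, critique)
        if reply is not None:
            return persona, reply
    return None  # every channel struck out
```

The key design choice is that `dispatch` never forwards the failed conversation to the next persona. Whether three is the right threshold, and what counts as a "core failure" inside `critique`, are judgment calls the sketch leaves to the operator.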

Selecting the Frequency

When you switch channels, you are not just changing a label; you are changing the a priori assumptions the model makes about the world. An "Analytic" channel assumes that precision is the primary virtue and that brevity is a secondary benefit. A "Creative" channel assumes that novelty is the primary virtue and that precision is a constraint to be played with.

The shift from digging to switching transforms the operator's role. You are no longer a negotiator pleading with a ghost; you are a dispatcher routing a problem to the correct specialist. You stop asking, “How can I make the model understand me?” and start asking, “Which registry of behavior is best suited for this specific output?”

The result is less token waste and a marked increase in velocity, because failed attempts no longer pile up in the context to be reprocessed on every turn. The work moves from the friction of the dig to the clarity of the switch. The aphasia vanishes, the context window stays clean, and the output arrives not through force but through alignment.

Stop digging. Switch the channel.